The DALL·E Revolution: How AI Is Rewriting the Rules of Visual Creativity

What Is DALL·E?

Have you ever described a scene to a friend and had them immediately picture it in their head? DALL·E does the same thing, except that instead of imagining the scene, it draws it for you, within seconds and completely from scratch.

DALL·E is an artificial intelligence tool created by OpenAI that turns written descriptions into brand new images. You type a sentence — any sentence — and within seconds it produces an original image that has never existed before.

The simplest way to understand it:

Imagine a brilliantly talented artist who sits beside you 24 hours a day. You describe anything you can imagine, and they immediately create it perfectly. DALL·E is that artist — except it lives in your computer, works in seconds, never gets tired, and can draw anything.

The name DALL·E is a playful mashup of two famous names: Salvador Dalí, the Spanish surrealist painter known for imaginative, dreamlike artwork, and WALL·E, the lovable robot from the Pixar film. Put them together, and you get DALL·E — a creative, imaginative machine that makes art.

Does DALL·E copy or search for existing images?

No. DALL·E does not copy, download, or search the internet for existing images. It learned visual patterns from a large set of training images, and it uses those patterns to build each new picture from scratch. It generates rather than retrieves.

KEY TAKEAWAYS

- DALL·E is an AI tool that creates original images from written descriptions.

- It was made by OpenAI — the same company behind ChatGPT.

- Every image it creates is brand new and has never existed before.

- The name combines Salvador Dalí (artist) and WALL·E (Pixar robot).

- You do not need any artistic skill to use it. You only need words.

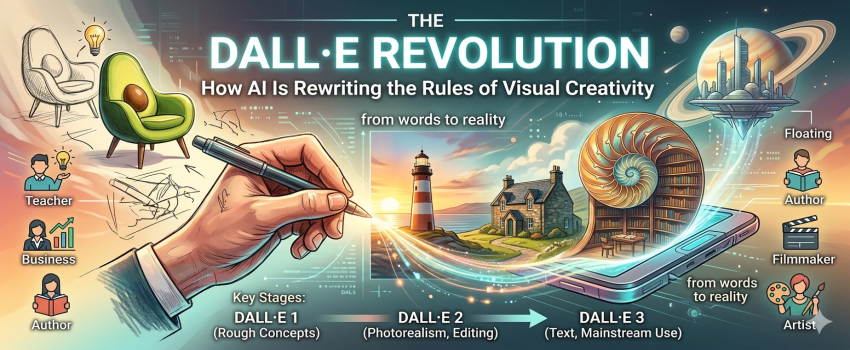

How DALL·E Has Evolved Over Time

Jan 2021: DALL·E 1 — The First Step

Apr 2022: DALL·E 2 — A Major Leap

Oct 2023: DALL·E 3 — The Version Millions Use Today

- DALL·E 1 (2021): Proved AI could understand and combine creative concepts. Groundbreaking but rough.

- DALL·E 2 (2022): Photorealistic quality. Editing tools added. Used seriously by creative professionals.

- DALL·E 3 (2023): The latest DALL·E release. Built into ChatGPT. Understands complex language. Renders text in images.

- Each version did not just improve quality — it expanded what was even possible.

How Does DALL·E Actually Create Images?

This is the question most people wonder about. How does a computer go from reading a sentence to drawing a perfect picture? It sounds like magic. It is not. There is a clear, logical process behind it — and once you understand it, the whole thing makes complete sense.

A helpful everyday comparison:

Imagine tuning an old radio. At first there is only static. Then, gradually, you begin to hear a melody. It becomes clearer and clearer with every passing second until the full song is there. DALL·E creates images in much the same way: it starts from visual noise and refines it, step by step, until a clear picture emerges.

HOW IT WORKS — STEP BY STEP

Step 1: You type your description (called a prompt), for example: “a lighthouse at sunrise painted in watercolour style.”

Step 2: DALL·E reads and analyses every word. It has learned what a lighthouse looks like, what colours a sunrise has, and what the soft, flowing texture of a watercolour painting looks like.

Step 3: The system starts with a completely random screen of visual noise — like static on an old television set.

Step 4: Guided by your description, it begins removing noise and adding structure, step by step, hundreds of times.

Step 5: After hundreds of tiny refinements, a sharp, complete image appears that matches your original description.

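This technique is called a diffusion model. The word 'diffusion' describes how something spreads out until its shape is lost, like a drop of ink dispersing through water. During training, the model sees clear images dissolve into noise and learns how to undo that process. When you type a prompt, DALL·E runs the process in reverse with pixels: it starts from noise and recovers a clear picture.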
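For readers who like to see ideas as code, here is a toy Python sketch of the refine-from-noise loop described in the steps above. It is an illustration under heavy assumptions, not how DALL·E is implemented: the names `toy_denoise` and `mean_error`, the five-number "image", and the fixed `target` are all made up for this example. A real diffusion model has no target to peek at. Instead, a trained neural network estimates which noise to remove at each step, guided by the prompt.

```python
import random


def toy_denoise(target, steps=50, seed=0):
    """Refine random noise toward `target` in small steps.

    ASSUMPTION: `target` stands in for "what the prompt describes".
    A real model never sees a target; a trained network predicts the
    noise to remove. This toy only shows the shape of the loop.
    """
    rng = random.Random(seed)
    # Start from pure random noise, like static on a screen.
    image = [rng.uniform(0.0, 1.0) for _ in target]
    history = [list(image)]
    for step in range(steps):
        # Close a growing fraction of the remaining gap on every step,
        # so the final step lands on the target.
        fraction = 1.0 / (steps - step)
        image = [p + fraction * (t - p) for p, t in zip(image, target)]
        history.append(list(image))
    return history


def mean_error(image, target):
    """Average absolute difference between two equally sized images."""
    return sum(abs(p - t) for p, t in zip(image, target)) / len(target)


# A five-"pixel" gradient plays the role of the finished picture.
target = [0.0, 0.25, 0.5, 0.75, 1.0]
history = toy_denoise(target)

for step in (0, 10, 25, 50):
    print(step, round(mean_error(history[step], target), 3))
```

The printed error shrinks at every checkpoint, which is the "melody emerging from noise" idea in miniature.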

Key Terms Explained in Plain Language

Diffusion Model: The core technique. DALL·E starts with random noise and gradually clears it into a focused image, guided by your words. Think of a photograph developing: a blank sheet slowly becomes a sharp picture.

Prompt: The written description you type to DALL·E. The more specific and detailed your prompt is, the more accurate and impressive the result will be.

CLIP: A system that connects word meanings to visual ideas. It understands that “mournful” looks visually different from “joyful,” even in a painting. It bridges language and imagery.

Neural Network: The underlying technology. A computer system trained on millions of image examples, loosely inspired by how human brains process and recognise patterns.

Inpainting: Editing a specific region inside an existing image while leaving the rest unchanged. Like using a precise eraser and redrawing only one part.

Outpainting: Extending an image beyond its original edges. You can take a portrait and expand it to reveal the full room, garden, or landscape around the subject.
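The inpainting and outpainting ideas above both come down to a mask that marks which pixels may change. Here is a minimal Python sketch of that idea, using small grids of numbers as stand-in images. The function names `inpaint` and `outpaint_canvas` are hypothetical and chosen for this example. A real editor also generates the new pixels so that they blend with their surroundings; this sketch assumes the generated pixels already exist and only shows how the mask decides what is kept.

```python
def inpaint(original, generated, mask):
    """Take `generated` pixels where the mask is 1, keep `original` elsewhere.

    All three arguments are equally sized grids (lists of rows).
    """
    return [
        [g if m else o for o, g, m in zip(orig_row, gen_row, mask_row)]
        for orig_row, gen_row, mask_row in zip(original, generated, mask)
    ]


def outpaint_canvas(original, pad, fill=0):
    """Place `original` in the middle of a larger canvas.

    Returns the canvas plus a mask that is 1 on the new border (to be
    generated) and 0 over the original pixels (to be kept).
    """
    height, width = len(original), len(original[0])
    canvas = [[fill] * (width + 2 * pad) for _ in range(height + 2 * pad)]
    mask = [[1] * (width + 2 * pad) for _ in range(height + 2 * pad)]
    for r in range(height):
        for c in range(width):
            canvas[r + pad][c + pad] = original[r][c]
            mask[r + pad][c + pad] = 0
    return canvas, mask


original = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
generated = [[9, 9, 9], [9, 9, 9], [9, 9, 9]]
mask = [[0, 0, 0], [0, 1, 1], [0, 1, 1]]  # edit only the lower-right corner

result = inpaint(original, generated, mask)
print(result)  # [[1, 1, 1], [1, 9, 9], [1, 9, 9]]
```

Outpainting is then the same operation on a bigger canvas: `outpaint_canvas` prepares the enlarged image and a mask covering only the new border.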

Can DALL·E understand any style of art?

Yes. It has been trained across a vast range of artistic styles and mediums. You can request oil painting, watercolour, pencil sketch, photography, cartoon, pixel art, linocut, ink wash, and hundreds more. It understands not just what a style looks like but the texture, colour palette, and mood it carries.

KEY TAKEAWAYS

- DALL·E uses a diffusion model — it starts from noise and refines it into a clear image.

- The process happens in hundreds of tiny refinement steps guided by your words.

- CLIP connects word meaning to visual ideas, ensuring language and image stay in sync.

- The more descriptive and specific your prompt, the more accurate the result.

- It understands hundreds of art styles, mediums, lighting types, and compositional directions.

How to Write a Great Prompt

The prompt is the instruction you give DALL·E. Think of it as giving directions to that talented imaginary artist. The clearer and more detailed your directions are, the better and more impressive the result will be.

A weak prompt: “a house” — produces a generic, forgettable result.

A strong prompt: “a stone cottage surrounded by wildflowers in Scotland, photographed at golden hour with soft misty mountains in the background.” — produces something beautiful and specific.

THE FORMULA FOR A GREAT PROMPT

SUBJECT + SETTING + ART STYLE + MOOD + LIGHTING

Example: A young girl (subject) reading by a river in autumn (setting),

illustrated in classic storybook style (art style), peaceful and warm (mood),

with soft golden afternoon light filtering through the trees (lighting).

Every detail you add gives DALL·E more to work with. More detail = better result.
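If you write many prompts, the formula above can be turned into a small helper. The Python sketch below is one possible way to do it. The function name `build_prompt` and its parameters are assumptions made for this example, not part of any DALL·E tool. It simply joins the five ingredients in order and skips any you leave empty.

```python
def build_prompt(subject, setting="", art_style="", mood="", lighting=""):
    """Join the formula's parts into one prompt, skipping empty parts."""
    parts = [subject, setting, art_style, mood, lighting]
    return ", ".join(part.strip() for part in parts if part and part.strip())


prompt = build_prompt(
    subject="A young girl",
    setting="reading by a river in autumn",
    art_style="illustrated in classic storybook style",
    mood="peaceful and warm",
    lighting="with soft golden afternoon light filtering through the trees",
)
print(prompt)
```

Keeping each ingredient in its own argument makes it easy to change one thing at a time, such as the lighting, and compare the results.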

6 Ready-to-Use Prompt Examples with Explanations

NATURE & SURREALISM

“A giant library inside a hollow nautilus shell, illuminated by bioluminescent jellyfish, painted in rich oil colours with dramatic chiaroscuro lighting”

Why it works: Combines a familiar setting (library) with an imaginative location (nautilus shell). Specifies the light source (jellyfish), art medium (oil colours), and lighting style (chiaroscuro). DALL·E has precise instructions at every level.

ARCHITECTURE

“A skyscraper grown entirely from living ancient trees with cantilevered glass floors and waterfalls cascading between branches, photographed at golden hour with hyperrealistic detail”

Why it works: The subject is vivid and specific. Impossible details (glass floors, waterfalls) are described precisely. The camera instruction (golden hour, hyperrealistic) tells DALL·E exactly the visual tone and finish to aim for.

SCIENCE & MACRO

“The inside of a single water droplet magnified 10,000 times, showing crystalline lattice structures in iridescent blues and golds, in the style of a scientific illustration”

Why it works: Gives a specific scale (10,000 times), specific colours (blue and gold), and a defined visual format (scientific illustration). No guesswork. Every element is stated clearly.

FASHION & PORTRAIT

“A fashion photograph of a woman wearing a gown constructed from pressed autumn leaves and morning dew, Vogue editorial lighting, shot on medium format camera, shallow depth of field”

Why it works: The magazine reference (Vogue), the camera type (medium format), and the technical photography term (shallow depth of field) steer DALL·E toward a genuinely professional-looking result.

CONCEPT & EMOTION

“Human emotions rendered as a physical landscape: sadness as deep ocean trenches, joy as sunlit alpine peaks, nostalgia as amber valleys at dusk, painted in the style of a classical landscape painting”

Why it works: Translates abstract feelings into concrete visual metaphors. Each emotion gets its own colour and geography. The art style reference anchors the overall look. Creative and surprisingly achievable.

HISTORY & CULTURE

“A 1970s science fiction poster of a floating city above Saturn, designed as a vintage travel advertisement, warm amber and teal palette, halftone print texture, retro typography”

Why it works: The decade (1970s), design format (travel poster), colour palette, and texture are all stated. DALL·E can reconstruct a very specific historical aesthetic when you give it this much detail.

KEY TAKEAWAYS

- Use the formula: Subject + Setting + Art Style + Mood + Lighting.

- Naming a specific art medium (oil painting, watercolour, photography) shapes the result dramatically.

- Specify impossible or imaginative subjects freely. DALL·E can illustrate scenes that could never be photographed.

- Camera language (medium format, golden hour, shallow depth of field) produces professional-looking results.

- Do not aim for perfection on the first try. Generate, review, refine your prompt, and try again.

Why Does DALL·E Matter?

DALL·E is not just an interesting technical tool. It is actively changing how people work, learn, build businesses, and express ideas. Its impact reaches far beyond the world of professional design.

For Teachers

Create custom illustrations for lessons. Visualise historical moments. Make learning materials that are specific to your students’ needs.

For Business Owners

Design marketing graphics, social posts, product concepts, and branding visuals without the cost and time of hiring a designer.

For Authors & Writers

Generate cover concepts, character portraits, scene illustrations, and world-building imagery to bring your stories to life.

For Filmmakers

Visualise scenes, mood boards, and character designs before a single day of production begins. Save time and budget at the concept stage.

For Everyday People

Create personalised artwork, gifts, greetings, and home decor without needing any artistic training or expensive software.

For Professional Artists

Explore ten visual directions in an afternoon. Use AI for rapid prototyping while your human judgment directs the final creative vision.

The right comparison from history:

When cameras were invented in the 1800s, people feared that painters would have no more work. The opposite happened. Photography freed painters to explore entirely new movements — impressionism, cubism, abstract expressionism. The tool did not end creativity. It launched a new era of it. DALL·E is doing the same thing today.

Will DALL·E replace human artists and designers?

No. But the role is shifting. AI handles technical execution faster than any human can. However, it cannot replace human judgment, cultural sensitivity, emotional understanding, or genuine original thinking. The best creative results come from humans directing AI with clear purpose and vision. The skill of knowing what you want and describing it well has become more valuable than ever.

KEY TAKEAWAYS

- DALL·E makes visual creativity accessible to teachers, business owners, authors, and everyday people.

- It accelerates the creative process for professionals without replacing their judgment or vision.

- Like the camera before it, it does not end human creativity — it opens entirely new creative possibilities.

- The most powerful results always come from human imagination directing the AI tool.

- The skill of communicating ideas clearly — through well-written prompts — is now a genuinely valuable ability.

What Does the Future Look Like?

DALL·E today is impressive. But we are still in the earliest chapter of this technology. The next few years will bring changes that are hard to fully predict. Here is what we can already see coming.

THREE BIG DEVELOPMENTS ALREADY UNDERWAY

1. VIDEO GENERATION — AI tools are already moving from still images to video. Soon you will type a description of a scene and watch a short film clip generated in seconds.

2. REAL-TIME CREATION — Images appear and update as you type each word, allowing live, interactive visual thinking rather than waiting for a result.

3. 3D WORLD BUILDING — You describe a space and walk through it as if in a video game. Architecture, interior design, and virtual environments created from a sentence.

One thing is already certain: the people who learn to work with these tools clearly and thoughtfully will have a significant creative and professional advantage. The bottleneck is no longer technical ability. It is imagination and the skill to describe what you want with precision.

The broader perspective:

We are living through a moment where the ability to make images is no longer limited by artistic training, budget, or time. For the first time in history, anyone with an idea and the words to describe it can create world-class visual work. That is a genuinely historic shift in human creative capability.

KEY TAKEAWAYS

- AI image generation is already moving toward video, real-time creation, and 3D world building.

- The most important skill is knowing what you want and being able to describe it precisely.

- Human creativity, emotional depth, and cultural judgment remain irreplaceable.

- Learning to use these tools is one of the most valuable creative skills of this decade.

- The future belongs to people who combine their imagination with the power of AI tools.
